How to End a Method and Then Call the Same Method Again

Threading in C#

Last updated: 2011-4-27

Part 2: Basic Synchronization

Synchronization Essentials

So far, we've described how to start a task on a thread, configure a thread, and pass data in both directions. We've also described how local variables are private to a thread and how references can be shared among threads, allowing them to communicate via common fields.

The next step is synchronization: coordinating the actions of threads for a predictable outcome. Synchronization is particularly important when threads access the same data; it's surprisingly easy to run aground in this area.

Synchronization constructs can be divided into four categories:

- Simple blocking methods

  These wait for another thread to finish or for a period of time to elapse. Sleep, Join, and Task.Wait are simple blocking methods.

- Locking constructs

  These limit the number of threads that can perform some activity or execute a section of code at a time. Exclusive locking constructs are most common: these allow just one thread in at a time, and allow competing threads to access common data without interfering with each other. The standard exclusive locking constructs are lock (Monitor.Enter/Monitor.Exit), Mutex, and SpinLock. The nonexclusive locking constructs are Semaphore, SemaphoreSlim, and the reader/writer locks.

- Signaling constructs

  These allow a thread to pause until receiving a notification from another, avoiding the need for inefficient polling. There are two commonly used signaling devices: event wait handles and Monitor's Wait/Pulse methods. Framework 4.0 introduces the CountdownEvent and Barrier classes.

- Nonblocking synchronization constructs

  These protect access to a common field by calling upon processor primitives. The CLR and C# provide the following nonblocking constructs: Thread.MemoryBarrier, Thread.VolatileRead, Thread.VolatileWrite, the volatile keyword, and the Interlocked class.

Blocking is essential to all but the last category. Let's briefly examine this concept.

Blocking

A thread is deemed blocked when its execution is paused for some reason, such as when Sleeping or waiting for another to end via Join or EndInvoke. A blocked thread immediately yields its processor time slice, and from then on consumes no processor time until its blocking condition is satisfied. You can test for a thread being blocked via its ThreadState property:

bool blocked = (someThread.ThreadState & ThreadState.WaitSleepJoin) != 0;

(Given that a thread's state may change in between testing its state and then acting upon that information, this code is useful only in diagnostic scenarios.)

When a thread blocks or unblocks, the operating system performs a context switch. This incurs an overhead of a few microseconds.

Unblocking happens in one of four ways (the computer's power button doesn't count!):

- by the blocking condition being satisfied

- by the operation timing out (if a timeout is specified)

- by being interrupted via Thread.Interrupt

- by being aborted via Thread.Abort

A thread is not deemed blocked if its execution is paused via the (deprecated) Suspend method.

Blocking Versus Spinning

Sometimes a thread must pause until a certain condition is met. Signaling and locking constructs achieve this efficiently by blocking until a condition is satisfied. However, there is a simpler alternative: a thread can await a condition by spinning in a polling loop. For example:

while (!proceed);

or:

while (DateTime.Now < nextStartTime);

In general, this is very wasteful on processor time: as far as the CLR and operating system are concerned, the thread is performing an important calculation, and so gets allocated resources appropriately!

Sometimes a hybrid between blocking and spinning is used:

while (!proceed) Thread.Sleep (10);

Although inelegant, this is (in general) far more efficient than outright spinning. Problems can arise, though, due to concurrency issues on the proceed flag. Proper use of locking and signaling avoids this.

Spinning very briefly can be effective when you expect a condition to be satisfied soon (perhaps within a few microseconds) because it avoids the overhead and latency of a context switch. The .NET Framework provides special methods and classes to help; these are covered in the parallel programming section.
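As a rough illustration of replacing the polling loop with signaling, the sketch below swaps the bool flag for a ManualResetEventSlim (a Framework 4.0 class that spins very briefly before blocking). The class name SignalInsteadOfPoll and the payload value are assumptions made up for this example; signaling constructs are covered properly elsewhere in this series.

```csharp
using System;
using System.Threading;

public static class SignalInsteadOfPoll
{
    // Returns the value the worker observed after it was released.
    public static int Run()
    {
        int payload = 0;
        int observed = -1;

        using (var proceed = new ManualResetEventSlim(false))
        {
            var worker = new Thread(() =>
            {
                proceed.Wait();      // blocks here instead of polling a bool flag
                observed = payload;  // runs only after Set() has been called
            });
            worker.Start();

            payload = 42;            // prepare the data, then signal
            proceed.Set();
            worker.Join();
        }
        return observed;
    }
}
```

Calling SignalInsteadOfPoll.Run() from a Main method should return the value written before the signal, with no concurrency issue on a shared flag because the event itself carries the notification.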

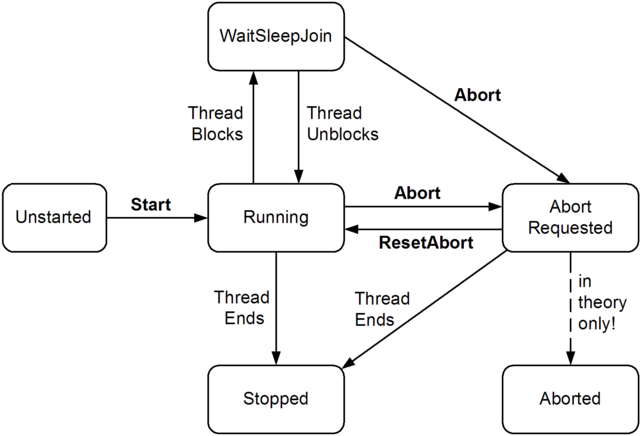

ThreadState

You tin can query a thread'south execution status via its ThreadState property. This returns a flags enum of type ThreadState, which combines three "layers" of information in a bitwise way. Most values, however, are redundant, unused, or deprecated. The following diagram shows one "layer":

The following lawmaking strips a ThreadState to one of the four most useful values: Unstarted, Running, WaitSleepJoin, and Stopped:

public static ThreadState SimpleThreadState (ThreadState ts) { return ts & (ThreadState.Unstarted | ThreadState.WaitSleepJoin | ThreadState.Stopped); } The ThreadState property is useful for diagnostic purposes, but unsuitable for synchronization, because a thread'due south country may change in betwixt testing ThreadState and acting on that information.
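To see what the mask removes, here is a small sketch that applies SimpleThreadState to a background thread before it starts and after it has finished. The wrapper class ThreadStateDemo and its Run method are hypothetical names for this example only.

```csharp
using System;
using System.Threading;

public static class ThreadStateDemo
{
    public static ThreadState SimpleThreadState(ThreadState ts)
    {
        return ts & (ThreadState.Unstarted |
                     ThreadState.WaitSleepJoin |
                     ThreadState.Stopped);
    }

    // Returns the simplified state before starting and after joining.
    public static ThreadState[] Run()
    {
        var t = new Thread(() => { });
        t.IsBackground = true;   // adds the Background flag, which the mask strips

        ThreadState before = SimpleThreadState(t.ThreadState);
        t.Start();
        t.Join();
        ThreadState after = SimpleThreadState(t.ThreadState);

        return new[] { before, after };
    }
}
```

Running is represented by the value 0, so a thread that is neither unstarted, blocked, nor stopped comes out of the mask as Running.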

Locking

Exclusive locking is used to ensure that only one thread can enter particular sections of code at a time. The two main exclusive locking constructs are lock and Mutex. Of the two, the lock construct is faster and more convenient. Mutex, though, has a niche in that its lock can span applications in different processes on the computer.

In this section, we'll start with the lock construct and then move on to Mutex and semaphores (for nonexclusive locking). Later, we'll cover reader/writer locks.

From Framework 4.0, there is also the SpinLock struct for high-concurrency scenarios.

Let's start with the following class:

    class ThreadUnsafe
    {
        static int _val1 = 1, _val2 = 1;

        static void Go()
        {
            if (_val2 != 0) Console.WriteLine (_val1 / _val2);
            _val2 = 0;
        }
    }

This class is not thread-safe: if Go was called by two threads simultaneously, it would be possible to get a division-by-zero error, because _val2 could be set to zero in one thread right as the other thread was in between executing the if statement and Console.WriteLine.

Here's how lock can fix the problem:

    class ThreadSafe
    {
        static readonly object _locker = new object();
        static int _val1, _val2;

        static void Go()
        {
            lock (_locker)
            {
                if (_val2 != 0) Console.WriteLine (_val1 / _val2);
                _val2 = 0;
            }
        }
    }

Only one thread can lock the synchronizing object (in this case, _locker) at a time, and any contending threads are blocked until the lock is released. If more than one thread contends the lock, they are queued on a "ready queue" and granted the lock on a first-come, first-served basis (a caveat is that nuances in the behavior of Windows and the CLR mean that the fairness of the queue can sometimes be violated). Exclusive locks are sometimes said to enforce serialized access to whatever's protected by the lock, because one thread's access cannot overlap with that of another. In this case, we're protecting the logic inside the Go method, as well as the fields _val1 and _val2.

A thread blocked while awaiting a contended lock has a ThreadState of WaitSleepJoin. In Interrupt and Abort, we describe how a blocked thread can be forcibly released via another thread. This is a fairly heavy-duty technique that might be used in ending a thread.

Monitor.Enter and Monitor.Exit

C#'s lock statement is in fact a syntactic shortcut for a call to the methods Monitor.Enter and Monitor.Exit, with a try/finally block. Here's (a simplified version of) what's actually happening within the Go method of the preceding example:

    Monitor.Enter (_locker);
    try
    {
        if (_val2 != 0) Console.WriteLine (_val1 / _val2);
        _val2 = 0;
    }
    finally { Monitor.Exit (_locker); }

Calling Monitor.Exit without first calling Monitor.Enter on the same object throws an exception.
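The exception in question is a SynchronizationLockException. A minimal sketch that demonstrates it (the class and method names are invented for this example):

```csharp
using System;
using System.Threading;

public static class MonitorExitDemo
{
    // Returns true if Monitor.Exit rejects a lock this thread never took.
    public static bool ExitWithoutEnterThrows()
    {
        object locker = new object();
        try
        {
            Monitor.Exit(locker);   // this thread never called Monitor.Enter
            return false;
        }
        catch (SynchronizationLockException)
        {
            return true;
        }
    }
}
```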

The lockTaken overloads

The code that we just demonstrated is exactly what the C# 1.0, 2.0, and 3.0 compilers produce in translating a lock statement.

There's a subtle vulnerability in this code, however. Consider the (unlikely) event of an exception being thrown within the implementation of Monitor.Enter, or between the call to Monitor.Enter and the try block (due, perhaps, to Abort being called on that thread, or an OutOfMemoryException being thrown). In such a scenario, the lock may or may not be taken. If the lock is taken, it won't be released, because we'll never enter the try/finally block. This will result in a leaked lock.

To avoid this danger, CLR 4.0's designers added the following overload to Monitor.Enter:

public static void Enter (object obj, ref bool lockTaken);

lockTaken is false after this method if (and only if) the Enter method throws an exception and the lock was not taken.

Here's the correct pattern of use (which is exactly how C# 4.0 translates a lock statement):

    bool lockTaken = false;
    try
    {
        Monitor.Enter (_locker, ref lockTaken);
        // Do your stuff...
    }
    finally { if (lockTaken) Monitor.Exit (_locker); }

TryEnter

Monitor also provides a TryEnter method that allows a timeout to be specified, either in milliseconds or as a TimeSpan. The method then returns true if a lock was obtained, or false if no lock was obtained because the method timed out. TryEnter can also be called with no timeout argument, which "tests" the lock, timing out immediately if the lock can't be obtained right away.

As with the Enter method, it's overloaded in CLR 4.0 to accept a lockTaken argument.
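The following sketch exercises the timeout and lockTaken overload of TryEnter, once while another thread holds the lock and once after it has been released. TryEnterDemo, TryWithLock, and the 100 ms timeout are illustrative choices, not part of the original text.

```csharp
using System;
using System.Threading;

public static class TryEnterDemo
{
    // Returns { result while another thread holds the lock, result once it is free }.
    public static bool[] Run()
    {
        object locker = new object();

        using (var held = new ManualResetEventSlim(false))
        using (var release = new ManualResetEventSlim(false))
        {
            var holder = new Thread(() =>
            {
                lock (locker)
                {
                    held.Set();       // tell the caller the lock is now taken
                    release.Wait();   // keep holding until told to let go
                }
            });
            holder.Start();
            held.Wait();

            // Contended: wait up to 100 ms, then give up.
            bool whileHeld = TryWithLock(locker, 100);

            release.Set();
            holder.Join();

            // Uncontended: a timeout of 0 just tests the lock.
            bool whenFree = TryWithLock(locker, 0);

            return new[] { whileHeld, whenFree };
        }
    }

    static bool TryWithLock(object locker, int millisecondsTimeout)
    {
        bool lockTaken = false;
        try
        {
            Monitor.TryEnter(locker, millisecondsTimeout, ref lockTaken);
            return lockTaken;
        }
        finally
        {
            if (lockTaken) Monitor.Exit(locker);
        }
    }
}
```

The first attempt should time out and report false; the second should succeed immediately.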

Choosing the Synchronization Object

Any object visible to each of the partaking threads can be used as a synchronizing object, subject to one hard rule: it must be a reference type. The synchronizing object is typically private (because this helps to encapsulate the locking logic) and is typically an instance or static field. The synchronizing object can double as the object it's protecting, as the _list field does in the following example:

    class ThreadSafe
    {
        List <string> _list = new List <string>();

        void Test()
        {
            lock (_list)
            {
                _list.Add ("Item 1");
                ...

A field dedicated for the purpose of locking (such as _locker, in the example prior) allows precise control over the scope and granularity of the lock. The containing object (this), or even its type, can also be used as a synchronization object:

lock (this) { ... }

or:

lock (typeof (Widget)) { ... }    // For protecting access to statics

The disadvantage of locking in this way is that you're not encapsulating the locking logic, so it becomes harder to prevent deadlocking and excessive blocking. A lock on a type may also seep through application domain boundaries (within the same process).

You can also lock on local variables captured by lambda expressions or anonymous methods.

Locking doesn't restrict access to the synchronizing object itself in any way. In other words, x.ToString() will not block because another thread has called lock(x); both threads must call lock(x) in order for blocking to occur.
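A short sketch of that last point: one thread holds a lock on a list while another thread reads the list's Count without asking for the lock, and is not blocked. The names LockIsCooperative and ReadWhileLocked are assumptions for illustration. (The read is harmless here only because nothing is modifying the list at the time.)

```csharp
using System;
using System.Collections.Generic;
using System.Threading;

public static class LockIsCooperative
{
    // Reads list.Count while another thread holds lock(list).
    public static int ReadWhileLocked()
    {
        var list = new List<int> { 1, 2, 3 };

        using (var held = new ManualResetEventSlim(false))
        using (var release = new ManualResetEventSlim(false))
        {
            var holder = new Thread(() =>
            {
                lock (list)
                {
                    held.Set();
                    release.Wait();   // hold the lock until the read is done
                }
            });
            holder.Start();
            held.Wait();

            // Not blocked: this code never requests the lock on list.
            int countSeen = list.Count;

            release.Set();
            holder.Join();
            return countSeen;
        }
    }
}
```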

When to Lock

As a basic rule, you need to lock around accessing any writable shared field. Even in the simplest case (an assignment operation on a single field) you must consider synchronization. In the following class, neither the Increment nor the Assign method is thread-safe:

    class ThreadUnsafe
    {
        static int _x;
        static void Increment() { _x++; }
        static void Assign()    { _x = 123; }
    }

Here are thread-safe versions of Increment and Assign:

    class ThreadSafe
    {
        static readonly object _locker = new object();
        static int _x;

        static void Increment() { lock (_locker) _x++; }
        static void Assign()    { lock (_locker) _x = 123; }
    }

In Nonblocking Synchronization, we explain how this need arises, and how the memory barriers and the Interlocked class can provide alternatives to locking in these situations.
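To check that the locked Increment really loses no updates, the sketch below hammers it from several threads and returns the final total. CounterDemo and its Run parameters are hypothetical; the locking itself follows the ThreadSafe class above.

```csharp
using System;
using System.Threading;

public static class CounterDemo
{
    static readonly object _locker = new object();
    static int _x;

    static void Increment() { lock (_locker) _x++; }

    // Runs Increment from several threads and returns the final count.
    public static int Run(int threadCount, int incrementsPerThread)
    {
        lock (_locker) _x = 0;

        var threads = new Thread[threadCount];
        for (int i = 0; i < threadCount; i++)
        {
            threads[i] = new Thread(() =>
            {
                for (int n = 0; n < incrementsPerThread; n++) Increment();
            });
            threads[i].Start();
        }
        foreach (Thread t in threads) t.Join();

        lock (_locker) return _x;   // read under the same lock
    }
}
```

With the lock in place, CounterDemo.Run (4, 100000) should always return 400000; removing the lock from Increment would typically make the total fall short.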

Locking and Atomicity

If a group of variables are always read and written within the same lock, you can say the variables are read and written atomically. Let's suppose fields x and y are always read and assigned within a lock on object locker:

lock (locker) { if (x != 0) y /= x; }

One can say x and y are accessed atomically, because the code block cannot be divided or preempted by the actions of another thread in such a way that it will change x or y and invalidate its outcome. You'll never get a division-by-zero error, providing x and y are always accessed within this same exclusive lock.

The atomicity provided by a lock is violated if an exception is thrown within a lock block. For example, consider the following:

    decimal _savingsBalance, _checkBalance;

    void Transfer (decimal amount)
    {
        lock (_locker)
        {
            _savingsBalance += amount;
            _checkBalance -= amount + GetBankFee();
        }
    }

If an exception was thrown by GetBankFee(), the bank would lose money. In this case, we could avoid the problem by calling GetBankFee earlier. A solution for more complex cases is to implement "rollback" logic within a catch or finally block.
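One way to sketch the "call GetBankFee earlier" fix is shown below. The Account class, its starting balances of 100, and the injected fee delegate are assumptions introduced so that the example is self-contained; only the ordering of the fee lookup relative to the lock reflects the advice above.

```csharp
using System;

public class Account
{
    readonly object _locker = new object();
    decimal _savingsBalance = 100m, _checkBalance = 100m;
    readonly Func<decimal> _getBankFee;   // hypothetical fee lookup that may throw

    public Account(Func<decimal> getBankFee) { _getBankFee = getBankFee; }

    public void Transfer(decimal amount)
    {
        // Anything that can throw runs before either balance is touched.
        decimal fee = _getBankFee();

        lock (_locker)
        {
            _savingsBalance += amount;
            _checkBalance -= amount + fee;
        }
    }

    public decimal Savings  { get { lock (_locker) return _savingsBalance; } }
    public decimal Checking { get { lock (_locker) return _checkBalance; } }
}
```

If the fee lookup throws, Transfer exits before the lock is taken, so both balances remain as they were.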

Instruction atomicity is a different, although analogous concept: an instruction is atomic if it executes indivisibly on the underlying processor (see Nonblocking Synchronization).

Nested Locking

A thread can repeatedly lock the same object in a nested (reentrant) fashion:

    lock (locker)
        lock (locker)
            lock (locker)
            {
                // Do something...
            }

or:

    Monitor.Enter (locker); Monitor.Enter (locker); Monitor.Enter (locker);
    // Do something...
    Monitor.Exit (locker); Monitor.Exit (locker); Monitor.Exit (locker);

In these scenarios, the object is unlocked only when the outermost lock statement has exited, or a matching number of Monitor.Exit statements have executed.

Nested locking is useful when one method calls another within a lock:

    static readonly object _locker = new object();

    static void Main()
    {
        lock (_locker)
        {
            AnotherMethod();
            // We still have the lock, because locks are reentrant.
        }
    }

    static void AnotherMethod()
    {
        lock (_locker) { Console.WriteLine ("Another method"); }
    }

A thread can block on only the first (outermost) lock.
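The "matching number of Monitor.Exit statements" rule can be observed from a second thread. In this sketch (all names are invented for the example, and try/finally is omitted for brevity), the lock is entered twice; a probing thread cannot take it after one Exit, but can after the second.

```csharp
using System;
using System.Threading;

public static class ReentrancyDemo
{
    // Asks a separate thread whether it can take the lock right now.
    static bool OtherThreadCanEnter(object locker)
    {
        bool entered = false;
        var probe = new Thread(() =>
        {
            if (Monitor.TryEnter(locker))
            {
                entered = true;
                Monitor.Exit(locker);
            }
        });
        probe.Start();
        probe.Join();
        return entered;
    }

    // Returns { available after one Exit, available after both Exits }.
    public static bool[] Run()
    {
        object locker = new object();

        Monitor.Enter(locker);
        Monitor.Enter(locker);   // nested (reentrant) acquisition

        Monitor.Exit(locker);    // one level released, lock still held
        bool afterOneExit = OtherThreadCanEnter(locker);

        Monitor.Exit(locker);    // matching Exit count reached
        bool afterBothExits = OtherThreadCanEnter(locker);

        return new[] { afterOneExit, afterBothExits };
    }
}
```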

Deadlocks

A deadlock happens when two threads each wait for a resource held by the other, so neither can proceed. The easiest way to illustrate this is with two locks:

    object locker1 = new object();
    object locker2 = new object();

    new Thread (() => {
                        lock (locker1)
                        {
                            Thread.Sleep (1000);
                            lock (locker2);      // Deadlock
                        }
                      }).Start();
    lock (locker2)
    {
        Thread.Sleep (1000);
        lock (locker1);                          // Deadlock
    }

More elaborate deadlocking chains can be created with three or more threads.

The CLR, in a standard hosting environment, is not like SQL Server and does not automatically detect and resolve deadlocks by terminating one of the offenders. A threading deadlock causes participating threads to block indefinitely, unless you've specified a locking timeout. (Under the SQL CLR integration host, however, deadlocks are automatically detected and a [catchable] exception is thrown on one of the threads.)
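As a sketch of what "specifying a locking timeout" might look like, the following recreates the two-lock scenario but takes the second lock with Monitor.TryEnter and a timeout, so each thread gives up instead of blocking forever. The Barrier is there only to force the deadlock-prone interleaving deterministically; the class name, method names, and the 200 ms timeout are assumptions for illustration.

```csharp
using System;
using System.Threading;

public static class DeadlockTimeoutDemo
{
    // Takes 'first', then tries 'second' with a timeout.
    // Returns true only if the second lock was obtained.
    static bool TakeBoth(object first, object second, Barrier barrier, int timeoutMs)
    {
        lock (first)
        {
            barrier.SignalAndWait();      // both threads now hold their first lock
            bool gotSecond = false;
            try
            {
                Monitor.TryEnter(second, timeoutMs, ref gotSecond);
                return gotSecond;
            }
            finally
            {
                if (gotSecond) Monitor.Exit(second);
                barrier.SignalAndWait();  // keep 'first' held until both attempts finish
            }
        }
    }

    // Returns whether each of the two threads managed to take its second lock.
    public static bool[] Run()
    {
        object locker1 = new object(), locker2 = new object();
        bool a = true, b = true;

        using (var barrier = new Barrier(2))
        {
            var t = new Thread(() => a = TakeBoth(locker1, locker2, barrier, 200));
            t.Start();
            b = TakeBoth(locker2, locker1, barrier, 200);
            t.Join();
        }
        return new[] { a, b };
    }
}
```

Both attempts time out rather than hanging. In real code, the caller then has to decide what to do about the failure (retry, back off, or report an error); a timeout detects the problem but does not by itself resolve it.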

Deadlocking is one of the hardest problems in multithreading, especially when there are many interrelated objects. Fundamentally, the hard problem is that you can't be sure what locks your caller has taken out.

So, you might innocently lock private field a within your class x, unaware that your caller (or caller's caller) has already locked field b within class y. Meanwhile, another thread is doing the reverse, creating a deadlock. Ironically, the problem is exacerbated by (good) object-oriented design patterns, because such patterns create call chains that are not determined until runtime.

The popular advice, "lock objects in a consistent order to avoid deadlocks," although helpful in our initial example, is hard to apply to the scenario just described. A better strategy is to be wary of locking around calling methods in objects that may have references back to your own object. Also, consider whether you really need to lock around calling methods in other classes (often you do, as we'll see later, but sometimes there are other options). Relying more on declarative and data parallelism, immutable types, and nonblocking synchronization constructs can lessen the need for locking.

Here is an alternative way to perceive the problem: when you call out to other code while holding a lock, the encapsulation of that lock subtly leaks. This is not a fault in the CLR or .NET Framework, but a fundamental limitation of locking in general. The problems of locking are being addressed in various research projects, including Software Transactional Memory.

Another deadlocking scenario arises when calling Dispatcher.Invoke (in a WPF application) or Control.Invoke (in a Windows Forms application) while in possession of a lock. If the UI happens to be running another method that's waiting on the same lock, a deadlock will happen right there. This can often be fixed simply by calling BeginInvoke instead of Invoke. Alternatively, you can release your lock before calling Invoke, although this won't work if your caller took out the lock. We explain Invoke and BeginInvoke in Rich Client Applications and Thread Affinity.

Performance

Locking is fast: you can expect to acquire and release a lock in as little as 20 nanoseconds on a 2010-era computer if the lock is uncontended. If it is contended, the consequential context switch moves the overhead closer to the microsecond region, although it may be longer before the thread is actually rescheduled. You can avoid the cost of a context switch with the SpinLock class, if you're locking very briefly.

Locking can degrade concurrency if locks are held for too long. This can also increase the chance of deadlock.

Mutex

A Mutex is like a C# lock, but it can work across multiple processes. In other words, Mutex can be computer-wide as well as application-wide.

Acquiring and releasing an uncontended Mutex takes a few microseconds, about 50 times slower than a lock.

With a Mutex class, you call the WaitOne method to lock and ReleaseMutex to unlock. Closing or disposing a Mutex automatically releases it. Just as with the lock statement, a Mutex can be released only from the same thread that obtained it.

A common use for a cross-process Mutex is to ensure that only one instance of a program can run at a time. Here's how it's done:

    class OneAtATimePlease
    {
        static void Main()
        {
            // Naming a Mutex makes it available computer-wide. Use a name that's
            // unique to your company and application (e.g., include your URL).

            using (var mutex = new Mutex (false, "oreilly.com OneAtATimeDemo"))
            {
                // Wait a few seconds if contended, in case another instance
                // of the program is still in the process of shutting down.

                if (!mutex.WaitOne (TimeSpan.FromSeconds (3), false))
                {
                    Console.WriteLine ("Another app instance is running. Bye!");
                    return;
                }
                RunProgram();
            }
        }

        static void RunProgram()
        {
            Console.WriteLine ("Running. Press Enter to exit");
            Console.ReadLine();
        }
    }

If running under Terminal Services, a computer-wide Mutex is ordinarily visible only to applications in the same terminal server session. To make it visible to all terminal server sessions, prefix its name with Global\.

Semaphore

A semaphore is like a nightclub: it has a certain capacity, enforced by a bouncer. Once it's full, no more people can enter, and a queue builds up outside. Then, for each person that leaves, one person enters from the head of the queue. The Semaphore constructor requires a minimum of two arguments: the number of places currently available in the nightclub and the club's total capacity.

A semaphore with a capacity of one is similar to a Mutex or lock, except that the semaphore has no "owner": it's thread-agnostic. Any thread can call Release on a Semaphore, whereas with Mutex and lock, only the thread that obtained the lock can release it.

There are two functionally similar versions of this class: Semaphore and SemaphoreSlim. The latter was introduced in Framework 4.0 and has been optimized to meet the low-latency demands of parallel programming. It's also useful in traditional multithreading because it lets you specify a cancellation token when waiting. It cannot, however, be used for interprocess signaling.

Semaphore incurs about 1 microsecond in calling WaitOne or Release; SemaphoreSlim incurs about a quarter of that.

Semaphores can be useful in limiting concurrency: preventing too many threads from executing a particular piece of code at once. In the following example, five threads try to enter a nightclub that allows only three threads in at once:

    class TheClub      // No door lists!
    {
        static SemaphoreSlim _sem = new SemaphoreSlim (3);    // Capacity of 3

        static void Main()
        {
            for (int i = 1; i <= 5; i++) new Thread (Enter).Start (i);
        }

        static void Enter (object id)
        {
            Console.WriteLine (id + " wants to enter");
            _sem.Wait();
            Console.WriteLine (id + " is in!");           // Only three threads
            Thread.Sleep (1000 * (int) id);               // can be here at
            Console.WriteLine (id + " is leaving");       // a time.
            _sem.Release();
        }
    }

    1 wants to enter
    1 is in!
    2 wants to enter
    2 is in!
    3 wants to enter
    3 is in!
    4 wants to enter
    5 wants to enter
    1 is leaving
    4 is in!
    2 is leaving
    5 is in!

If the Sleep statement was instead performing intensive disk I/O, the Semaphore would improve overall performance by limiting excessive concurrent hard-drive activity.

A Semaphore, if named, can span processes in the same way as a Mutex.
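To verify the capacity limit rather than eyeball the console output, this sketch counts how many threads are inside the semaphore at once and reports the peak. ClubCapacityDemo, the statsLock field, and the 50 ms sleep are illustrative assumptions.

```csharp
using System;
using System.Threading;

public static class ClubCapacityDemo
{
    // Returns the largest number of threads seen inside the semaphore at once.
    public static int MaxInsideAtOnce(int capacity, int visitors)
    {
        int inside = 0, maxInside = 0;
        object statsLock = new object();

        using (var sem = new SemaphoreSlim(capacity))
        {
            var threads = new Thread[visitors];
            for (int i = 0; i < visitors; i++)
            {
                threads[i] = new Thread(() =>
                {
                    sem.Wait();
                    try
                    {
                        lock (statsLock)
                        {
                            inside++;
                            if (inside > maxInside) maxInside = inside;
                        }
                        Thread.Sleep(50);            // stand-in for real work
                        lock (statsLock) inside--;
                    }
                    finally { sem.Release(); }
                });
                threads[i].Start();
            }
            foreach (Thread t in threads) t.Join();
        }
        return maxInside;
    }
}
```

However the threads are scheduled, the peak should never exceed the capacity passed to the SemaphoreSlim constructor.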

Thread Safety

A program or method is thread-safe if it has no indeterminacy in the face of any multithreading scenario. Thread safety is achieved primarily with locking and by reducing the possibilities for thread interaction.

General-purpose types are rarely thread-safe in their entirety, for the following reasons:

- The development burden in full thread safety can be significant, particularly if a type has many fields (each field is a potential for interaction in an arbitrarily multithreaded context).

- Thread safety can entail a performance cost (payable, in part, whether or not the type is actually used by multiple threads).

- A thread-safe type does not necessarily make the program using it thread-safe, and often the work involved in the latter makes the former redundant.

Thread safety is hence usually implemented just where it needs to be, in order to handle a specific multithreading scenario.

There are, however, a few ways to "cheat" and have large and complex classes run safely in a multithreaded environment. One is to sacrifice granularity by wrapping large sections of code (even access to an entire object) within a single exclusive lock, enforcing serialized access at a high level. This tactic is, in fact, essential if you want to use thread-unsafe third-party code (or most Framework types, for that matter) in a multithreaded context. The trick is simply to use the same exclusive lock to protect access to all properties, methods, and fields on the thread-unsafe object. The solution works well if the object's methods all execute quickly (otherwise, there will be a lot of blocking).

Primitive types aside, few .NET Framework types, when instantiated, are thread-safe for anything more than concurrent read-only access. The onus is on the developer to superimpose thread safety, typically with exclusive locks. (The collections in System.Collections.Concurrent are an exception.)

Another way to cheat is to minimize thread interaction by minimizing shared data. This is an excellent approach and is used implicitly in "stateless" middle-tier application and web page servers. Since multiple client requests can arrive simultaneously, the server methods they call must be thread-safe. A stateless design (popular for reasons of scalability) intrinsically limits the possibility of interaction, since classes do not persist data between requests. Thread interaction is then limited just to the static fields one may choose to create, for such purposes as caching commonly used data in memory and in providing infrastructure services such as authentication and auditing.

The final approach in implementing thread safety is to use an automatic locking regime. The .NET Framework does exactly this, if you subclass ContextBoundObject and apply the Synchronization attribute to the class. Whenever a method or property on such an object is then called, an object-wide lock is automatically taken for the whole execution of the method or property. Although this reduces the thread-safety burden, it creates problems of its own: deadlocks that would not otherwise occur, impoverished concurrency, and unintended reentrancy. For these reasons, manual locking is generally a better option, at least until a less simplistic automatic locking regime becomes available.

Thread Safety and .NET Framework Types

Locking can be used to convert thread-unsafe code into thread-safe code. A good application of this is the .NET Framework: nearly all of its nonprimitive types are not thread-safe (for anything more than read-only access) when instantiated, and yet they can be used in multithreaded code if all access to any given object is protected via a lock. Here's an example, where two threads simultaneously add an item to the same List collection, then enumerate the list:

    class ThreadSafe
    {
        static List <string> _list = new List <string>();

        static void Main()
        {
            new Thread (AddItem).Start();
            new Thread (AddItem).Start();
        }

        static void AddItem()
        {
            lock (_list) _list.Add ("Item " + _list.Count);

            string[] items;
            lock (_list) items = _list.ToArray();
            foreach (string s in items) Console.WriteLine (s);
        }
    }

In this case, we're locking on the _list object itself. If we had two interrelated lists, we would have to choose a common object upon which to lock (we could nominate one of the lists, or better: use an independent field).

Enumerating .NET collections is also thread-unsafe in the sense that an exception is thrown if the list is modified during enumeration. Rather than locking for the duration of enumeration, in this example we first copy the items to an array. This avoids holding the lock excessively if what we're doing during enumeration is potentially time-consuming. (Another solution is to use a reader/writer lock.)

Locking around thread-safe objects

Sometimes you also need to lock around accessing thread-safe objects. To illustrate, imagine that the Framework's List class was, indeed, thread-safe, and we want to add an item to a list:

if (!_list.Contains (newItem)) _list.Add (newItem);

Whether or not the list was thread-safe, this statement is certainly not! The whole if statement would have to be wrapped in a lock in order to prevent preemption in between testing for containership and adding the new item. This same lock would then need to be used everywhere we modified that list. For instance, the following statement would also need to be wrapped in the identical lock:

_list.Clear();

to ensure that it did not preempt the former statement. In other words, we would have to lock exactly as with our thread-unsafe collection classes (making the List class's hypothetical thread safety redundant).

Locking around accessing a collection can cause excessive blocking in highly concurrent environments. To this end, Framework 4.0 provides a thread-safe queue, stack, and dictionary.
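A minimal sketch of the check-then-add logic wrapped in a single lock, with Clear sharing that same lock, might look like this. The UniqueList wrapper and its member names are hypothetical; it uses an independent locker field rather than locking on the list itself.

```csharp
using System;
using System.Collections.Generic;

public class UniqueList<T>
{
    readonly List<T> _list = new List<T>();
    readonly object _locker = new object();

    // The test and the add happen under one lock, so no other thread
    // can slip in between them.
    public bool AddIfAbsent(T item)
    {
        lock (_locker)
        {
            if (_list.Contains(item)) return false;
            _list.Add(item);
            return true;
        }
    }

    // Every other modification must take the identical lock.
    public void Clear() { lock (_locker) _list.Clear(); }

    public int Count { get { lock (_locker) return _list.Count; } }
}
```

If several threads call AddIfAbsent with the same item at once, exactly one of them should get true and the list should end up with a single entry.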

Static members

Wrapping access to an object around a custom lock works only if all concurrent threads are aware of, and use, the lock. This may not be the case if the object is widely scoped. The worst case is with static members in a public type. For instance, imagine if the static property on the DateTime struct, DateTime.Now, was not thread-safe, and that two concurrent calls could result in garbled output or an exception. The only way to remedy this with external locking might be to lock the type itself, lock(typeof(DateTime)), before calling DateTime.Now. This would work only if all programmers agreed to do this (which is unlikely). Furthermore, locking a type creates problems of its own.

For this reason, static members on the DateTime struct have been carefully programmed to be thread-safe. This is a common pattern throughout the .NET Framework: static members are thread-safe; instance members are not. Following this pattern also makes sense when writing types for public consumption, so as not to create impossible thread-safety conundrums. In other words, by making static methods thread-safe, you're programming so as not to preclude thread safety for consumers of that type.

Thread safety in static methods is something that you must explicitly code: it doesn't happen automatically by virtue of the method being static!

Read-only thread safety

Making types thread-safe for concurrent read-only access (where possible) is advantageous because it means that consumers can avoid excessive locking. Many of the .NET Framework types follow this principle: collections, for instance, are thread-safe for concurrent readers.

Following this principle yourself is simple: if you document a type as being thread-safe for concurrent read-only access, don't write to fields within methods that a consumer would expect to be read-only (or lock around doing so). For instance, in implementing a ToArray() method in a collection, you might start by compacting the collection's internal structure. However, this would make it thread-unsafe for consumers that expected this to be read-only.

Read-only thread safety is one of the reasons that enumerators are separate from "enumerables": two threads can simultaneously enumerate over a collection because each gets a separate enumerator object.

In the absence of documentation, it pays to be cautious in assuming whether a method is read-only in nature. A good example is the Random class: when you call Random.Next(), its internal implementation requires that it update private seed values. Therefore, you must either lock around using the Random class, or maintain a separate instance per thread.
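Both options for Random can be sketched as follows. SafeRandom and its members are hypothetical names, and the per-thread seeding scheme (an incrementing counter started from Environment.TickCount) is just one plausible way to keep per-thread instances from sharing a seed; ThreadLocal<T> is a Framework 4.0 class.

```csharp
using System;
using System.Threading;

public static class SafeRandom
{
    // Option 1: one shared instance, with every call made under the same lock.
    static readonly object _locker = new object();
    static readonly Random _shared = new Random();

    public static int NextLocked(int maxValue)
    {
        lock (_locker) return _shared.Next(maxValue);
    }

    // Option 2: a separate instance per thread, so no lock is needed.
    // Each thread's instance gets a distinct seed from a shared counter.
    static int _seedCounter = Environment.TickCount;
    static readonly ThreadLocal<Random> _perThread =
        new ThreadLocal<Random>(() => new Random(Interlocked.Increment(ref _seedCounter)));

    public static int NextPerThread(int maxValue)
    {
        return _perThread.Value.Next(maxValue);
    }
}
```

The locked version is simpler; the per-thread version avoids contention when many threads request random numbers frequently.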

Thread Safe in Awarding Servers

Application servers need to be multithreaded to handle simultaneous client requests. WCF, ASP.Internet, and Web Services applications are implicitly multithreaded; the same holds true for Remoting server applications that utilize a network channel such equally TCP or HTTP. This ways that when writing code on the server side, you must consider thread condom if there's whatsoever possibility of interaction among the threads processing customer requests. Fortunately, such a possibility is rare; a typical server class is either stateless (no fields) or has an activation model that creates a separate object instance for each client or each asking. Interaction usually arises only through static fields, sometimes used for caching in memory parts of a database to ameliorate performance.

For example, suppose you have a RetrieveUser method that queries a database:

    // User is a custom class with fields for user data
    internal User RetrieveUser (int id) { ... }

If this method was called frequently, you could improve performance by caching the results in a static Dictionary. Here's a solution that takes thread safety into account:

    static class UserCache
    {
      static Dictionary <int, User> _users = new Dictionary <int, User>();

      internal static User GetUser (int id)
      {
        User u = null;

        lock (_users)
          if (_users.TryGetValue (id, out u))
            return u;

        u = RetrieveUser (id);           // Method to retrieve user from database
        lock (_users) _users [id] = u;
        return u;
      }
    }

We must, at a minimum, lock around reading and updating the dictionary to ensure thread safety. In this example, we choose a practical compromise between simplicity and performance in locking. Our design actually creates a very small potential for inefficiency: if two threads simultaneously called this method with the same previously unretrieved id, the RetrieveUser method would be called twice, and the dictionary would be updated unnecessarily. Locking once across the whole method would prevent this, but would create a worse inefficiency: the entire cache would be locked up for the duration of calling RetrieveUser, during which time other threads would be blocked in retrieving any user.

Rich Client Applications and Thread Affinity

Both the Windows Presentation Foundation (WPF) and Windows Forms libraries follow models based on thread affinity. Although each has a separate implementation, they are both very similar in how they function.

The objects that make up a rich client are based primarily on DependencyObject in the case of WPF, or Control in the case of Windows Forms. These objects have thread affinity, which means that only the thread that instantiates them can subsequently access their members. Violating this causes either unpredictable behavior, or an exception to be thrown.

On the positive side, this means you don't need to lock around accessing a UI object. On the negative side, if you want to call a member on object X created on another thread Y, you must marshal the request to thread Y. You can do this explicitly as follows:

- In WPF, call Invoke or BeginInvoke on the element's Dispatcher object.

- In Windows Forms, call Invoke or BeginInvoke on the control.

Invoke and BeginInvoke both accept a delegate, which references the method on the target control that you want to run. Invoke works synchronously: the caller blocks until the marshal is complete. BeginInvoke works asynchronously: the caller returns immediately and the marshaled request is queued up (using the same message queue that handles keyboard, mouse, and timer events).

Assuming we have a window that contains a text box called txtMessage, whose content we wish a worker thread to update, here's an example for WPF:

    public partial class MyWindow : Window
    {
      public MyWindow()
      {
        InitializeComponent();
        new Thread (Work).Start();
      }

      void Work()
      {
        Thread.Sleep (5000);           // Simulate time-consuming task
        UpdateMessage ("The answer");
      }

      void UpdateMessage (string message)
      {
        Action action = () => txtMessage.Text = message;
        Dispatcher.Invoke (action);
      }
    }

The code is similar for Windows Forms, except that we call the (Form's) Invoke method instead:

    void UpdateMessage (string message)
    {
      Action action = () => txtMessage.Text = message;
      this.Invoke (action);
    }

Worker threads versus UI threads

It's helpful to think of a rich client application as having two distinct categories of threads: UI threads and worker threads. UI threads instantiate (and subsequently "own") UI elements; worker threads do not. Worker threads typically execute long-running tasks such as fetching data.

Most rich client applications have a single UI thread (which is also the main application thread) and periodically spawn worker threads, either directly or using BackgroundWorker. These workers then marshal back to the main UI thread in order to update controls or report on progress.

So, when would an application have multiple UI threads? The main scenario is when you have an application with multiple top-level windows, often called a Single Document Interface (SDI) application, such as Microsoft Word. Each SDI window typically shows itself as a separate "application" on the taskbar and is mostly isolated, functionally, from other SDI windows. By giving each such window its own UI thread, the application can be made more responsive.

Immutable Objects

An immutable object is one whose state cannot be altered, externally or internally. The fields in an immutable object are typically declared read-only and are fully initialized during construction.

Immutability is a hallmark of functional programming, where instead of mutating an object, you create a new object with different properties. LINQ follows this paradigm. Immutability is also valuable in multithreading in that it avoids the problem of shared writable state by eliminating (or minimizing) the writable.

One pattern is to use immutable objects to encapsulate a group of related fields, to minimize lock durations. To take a very simple example, suppose we had two fields as follows:

    int _percentComplete;
    string _statusMessage;

and we wanted to read/write them atomically. Rather than locking around these fields, we could define the following immutable class:

    class ProgressStatus    // Represents progress of some activity
    {
      public readonly int PercentComplete;
      public readonly string StatusMessage;

      // This class might have many more fields...

      public ProgressStatus (int percentComplete, string statusMessage)
      {
        PercentComplete = percentComplete;
        StatusMessage = statusMessage;
      }
    }

Then we could define a single field of that type, along with a locking object:

    readonly object _statusLocker = new object();
    ProgressStatus _status;

We can now read/write values of that type without holding a lock for more than a single assignment:

    var status = new ProgressStatus (50, "Working on it");
    // Imagine we were assigning many more fields...
    // ...
    lock (_statusLocker) _status = status;    // Very brief lock

To read the object, we first obtain a copy of the object reference (inside a lock). Then we can read its values without needing to hold on to the lock:

    ProgressStatus statusCopy;
    lock (_statusLocker) statusCopy = _status;   // Again, a brief lock
    int pc = statusCopy.PercentComplete;
    string msg = statusCopy.StatusMessage;
    ...

Technically, the last two lines of code are thread-safe by virtue of the preceding lock performing an implicit memory barrier (see Part 4).

Note that this lock-free approach prevents inconsistency within a group of related fields. But it doesn't prevent data from changing while you subsequently act on it; for this, you usually need a lock. In Part 5, we'll see more examples of using immutability to simplify multithreading, including PLINQ.

It's also possible to safely assign a new ProgressStatus object based on its preceding value (e.g., it's possible to "increment" the PercentComplete value) without locking over more than one line of code. In fact, we can do this without using a single lock, through the use of explicit memory barriers, Interlocked.CompareExchange, and spin-waits. This is an advanced technique which we describe later in the parallel programming section.
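To give a rough idea of what such a lock-free update might look like, here is a minimal sketch. It is not the implementation from the parallel programming section: the `ProgressTracker` class and its members are hypothetical, `ProgressStatus` is repeated so the block stands alone, and `Volatile.Read` assumes Framework 4.5 or later.

```csharp
using System.Threading;

class ProgressStatus
{
    public readonly int PercentComplete;
    public readonly string StatusMessage;

    public ProgressStatus(int percentComplete, string statusMessage)
    {
        PercentComplete = percentComplete;
        StatusMessage = statusMessage;
    }
}

// Hypothetical wrapper that replaces the shared reference without any lock.
class ProgressTracker
{
    ProgressStatus _status = new ProgressStatus(0, "Starting");

    public ProgressStatus Current
    {
        get { return Volatile.Read(ref _status); }
    }

    public void Increment(int amount)
    {
        var spinner = new SpinWait();
        while (true)
        {
            // Take a snapshot, build the replacement from it, then swap it in
            // only if no other thread has replaced the reference meanwhile.
            ProgressStatus seen = Volatile.Read(ref _status);
            var updated = new ProgressStatus(seen.PercentComplete + amount,
                                             seen.StatusMessage);
            if (Interlocked.CompareExchange(ref _status, updated, seen) == seen)
                return;
            spinner.SpinOnce();   // Lost the race: back off briefly and retry
        }
    }
}
```

Because `ProgressStatus` is immutable, a thread that loses the race simply discards its candidate object and tries again from a fresh snapshot.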

Signaling with Event Wait Handles

Event wait handles are used for signaling. Signaling is when one thread waits until it receives notification from another. Event wait handles are the simplest of the signaling constructs, and they are unrelated to C# events. They come in three flavors: AutoResetEvent, ManualResetEvent, and (from Framework 4.0) CountdownEvent. The former two are based on the common EventWaitHandle class, where they derive all their functionality.

AutoResetEvent

An AutoResetEvent is like a ticket turnstile: inserting a ticket lets exactly one person through. The "auto" in the class's name refers to the fact that an open turnstile automatically closes or "resets" after someone steps through. A thread waits, or blocks, at the turnstile by calling WaitOne (wait at this "one" turnstile until it opens), and a ticket is inserted by calling the Set method. If a number of threads call WaitOne, a queue builds up behind the turnstile. (As with locks, the fairness of the queue can sometimes be violated due to nuances in the operating system.) A ticket can come from any thread; in other words, any (unblocked) thread with access to the AutoResetEvent object can call Set on it to release one blocked thread.

You can create an AutoResetEvent in two ways. The first is via its constructor:

var auto = new AutoResetEvent (false);

(Passing true into the constructor is equivalent to immediately calling Set upon it.) The second way to create an AutoResetEvent is as follows:

var auto = new EventWaitHandle (false, EventResetMode.AutoReset);

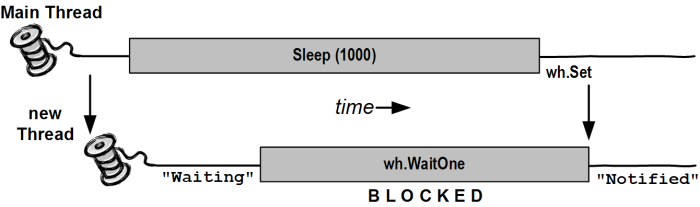

In the following example, a thread is started whose job is simply to wait until signaled by another thread:

    class BasicWaitHandle
    {
      static EventWaitHandle _waitHandle = new AutoResetEvent (false);

      static void Main()
      {
        new Thread (Waiter).Start();
        Thread.Sleep (1000);                  // Pause for a second...
        _waitHandle.Set();                    // Wake up the Waiter.
      }

      static void Waiter()
      {
        Console.WriteLine ("Waiting...");
        _waitHandle.WaitOne();                // Wait for notification
        Console.WriteLine ("Notified");
      }
    }

Output:

    Waiting... (pause) Notified.

If Set is called when no thread is waiting, the handle stays open for as long as it takes until some thread calls WaitOne. This behavior helps avoid a race between a thread heading for the turnstile, and a thread inserting a ticket ("Oops, inserted the ticket a microsecond too soon, bad luck, now you'll have to wait indefinitely!"). However, calling Set repeatedly on a turnstile at which no one is waiting doesn't let a whole party through when they arrive: only the next single person is let through and the extra tickets are "wasted."

Calling Reset on an AutoResetEvent closes the turnstile (should it be open) without waiting or blocking.

WaitOne accepts an optional timeout parameter, returning false if the wait ended because of a timeout rather than obtaining the signal.

Calling WaitOne with a timeout of 0 tests whether a wait handle is "open," without blocking the caller. Bear in mind, though, that doing this resets the AutoResetEvent if it's open.
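The timeout behavior can be observed with a short sketch such as the following (the class and method names are illustrative only):

```csharp
using System.Threading;

static class WaitOneTimeoutDemo
{
    // Returns { timedWait, firstPoll, secondPoll }.
    public static bool[] Run()
    {
        using (var gate = new AutoResetEvent(false))
        {
            bool timedWait = gate.WaitOne(100);  // Closed: gives up after ~100 ms
            gate.Set();                          // Insert one "ticket"
            bool firstPoll = gate.WaitOne(0);    // Open: succeeds and resets the event
            bool secondPoll = gate.WaitOne(0);   // Closed again: the poll used the ticket
            return new[] { timedWait, firstPoll, secondPoll };
        }
    }
}
```

Here `Run()` returns false, true, false: the first wait times out, the first poll finds the handle open and consumes the signal, and the second poll finds it closed again.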

Two-way signaling

Let's say we want the main thread to signal a worker thread three times in a row. If the main thread simply calls Set on a wait handle several times in rapid succession, the second or third signal may get lost, since the worker may take time to process each signal.

The solution is for the main thread to wait until the worker's ready before signaling it. This can be done with another AutoResetEvent, as follows:

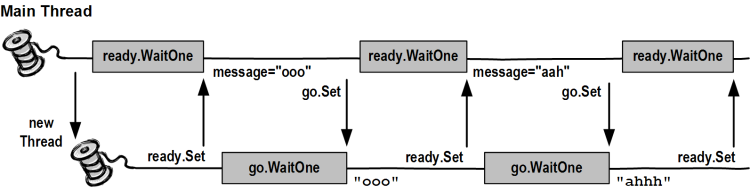

    class TwoWaySignaling
    {
      static EventWaitHandle _ready = new AutoResetEvent (false);
      static EventWaitHandle _go = new AutoResetEvent (false);
      static readonly object _locker = new object();
      static string _message;

      static void Main()
      {
        new Thread (Work).Start();

        _ready.WaitOne();                  // First wait until worker is ready
        lock (_locker) _message = "ooo";
        _go.Set();                         // Tell worker to go

        _ready.WaitOne();
        lock (_locker) _message = "ahhh";  // Give the worker another message
        _go.Set();

        _ready.WaitOne();
        lock (_locker) _message = null;    // Signal the worker to exit
        _go.Set();
      }

      static void Work()
      {
        while (true)
        {
          _ready.Set();                          // Indicate that we're ready
          _go.WaitOne();                         // Wait to be kicked off...
          lock (_locker)
          {
            if (_message == null) return;        // Gracefully exit
            Console.WriteLine (_message);
          }
        }
      }
    }

Output:

    ooo
    ahhh

Here, we're using a null message to indicate that the worker should end. With threads that run indefinitely, it's important to have an exit strategy!

Producer/consumer queue

A producer/consumer queue is a common requirement in threading. Here's how it works:

- A queue is set up to describe work items, or data upon which work is performed.

- When a task needs executing, it's enqueued, allowing the caller to get on with other things.

- One or more worker threads plug away in the background, picking off and executing queued items.

The advantage of this model is that you have precise control over how many worker threads execute at once. This can allow you to limit consumption of not just CPU time, but other resources too. If the tasks perform intensive disk I/O, for instance, you might have just one worker thread to avoid starving the operating system and other applications. Another type of application may have 20. You can also dynamically add and remove workers throughout the queue's life. The CLR's thread pool itself is a kind of producer/consumer queue.

A producer/consumer queue typically holds items of data upon which (the same) task is performed. For example, the items of data may be filenames, and the task might be to encrypt those files.

In the example below, we use a single AutoResetEvent to signal a worker, which waits when it runs out of tasks (in other words, when the queue is empty). We end the worker by enqueuing a null task:

    using System;
    using System.Threading;
    using System.Collections.Generic;

    class ProducerConsumerQueue : IDisposable
    {
      EventWaitHandle _wh = new AutoResetEvent (false);
      Thread _worker;
      readonly object _locker = new object();
      Queue<string> _tasks = new Queue<string>();

      public ProducerConsumerQueue()
      {
        _worker = new Thread (Work);
        _worker.Start();
      }

      public void EnqueueTask (string task)
      {
        lock (_locker) _tasks.Enqueue (task);
        _wh.Set();
      }

      public void Dispose()
      {
        EnqueueTask (null);     // Signal the consumer to exit.
        _worker.Join();         // Wait for the consumer's thread to finish.
        _wh.Close();            // Release any OS resources.
      }

      void Work()
      {
        while (true)
        {
          string task = null;
          lock (_locker)
            if (_tasks.Count > 0)
            {
              task = _tasks.Dequeue();
              if (task == null) return;
            }
          if (task != null)
          {
            Console.WriteLine ("Performing task: " + task);
            Thread.Sleep (1000);  // simulate work...
          }
          else
            _wh.WaitOne();         // No more tasks - wait for a signal
        }
      }
    }

To ensure thread safety, we used a lock to protect access to the Queue<string> collection. We also explicitly closed the wait handle in our Dispose method, since we could potentially create and destroy many instances of this class within the life of the application.

Here's a main method to test the queue:

    static void Main()
    {
      using (ProducerConsumerQueue q = new ProducerConsumerQueue())
      {
        q.EnqueueTask ("Hello");
        for (int i = 0; i < 10; i++) q.EnqueueTask ("Say " + i);
        q.EnqueueTask ("Goodbye!");
      }

      // Exiting the using statement calls q's Dispose method, which
      // enqueues a null task and waits until the consumer finishes.
    }

Output:

    Performing task: Hello
    Performing task: Say 0
    Performing task: Say 1
    Performing task: Say 2
    ...
    ...
    Performing task: Say 9
    Performing task: Goodbye!

Framework 4.0 provides a new class called BlockingCollection<T> that implements the functionality of a producer/consumer queue.

Our manually written producer/consumer queue is still valuable, not only to illustrate AutoResetEvent and thread safety, but also as a basis for more sophisticated structures. For instance, if we wanted a bounded blocking queue (limiting the number of enqueued tasks) and also wanted to support cancellation (and removal) of enqueued work items, our code would provide an excellent starting point. We'll take the producer/consumer queue example further in our discussion of Wait and Pulse.
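For comparison, a single-worker queue built on BlockingCollection<T> might look roughly like this. It is a sketch rather than a drop-in replacement: the class name `BlockingQueueDemo` and the `Performed` list (used here in place of console output so the result can be inspected) are assumptions made for illustration.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;

class BlockingQueueDemo : IDisposable
{
    readonly BlockingCollection<string> _tasks = new BlockingCollection<string>();
    readonly List<string> _performed = new List<string>();
    readonly Thread _worker;

    public BlockingQueueDemo()
    {
        _worker = new Thread(Work);
        _worker.Start();
    }

    public void EnqueueTask(string task)
    {
        _tasks.Add(task);             // Thread-safe; no explicit lock or Set needed
    }

    // Only read this after Dispose has returned (the worker has then finished).
    public IList<string> Performed
    {
        get { return _performed; }
    }

    public void Dispose()
    {
        _tasks.CompleteAdding();      // Replaces the "null task" exit signal
        _worker.Join();               // Wait for the consumer to drain the queue
        _tasks.Dispose();
    }

    void Work()
    {
        // Blocks while the queue is empty; ends once CompleteAdding has been
        // called and every remaining item has been consumed.
        foreach (string task in _tasks.GetConsumingEnumerable())
            _performed.Add(task);
    }
}
```

The collection takes over both jobs that the hand-written version split between a lock and an AutoResetEvent: protecting the queue and blocking the consumer when there is nothing to do.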

ManualResetEvent

A ManualResetEvent functions like an ordinary gate. Calling Set opens the gate, allowing any number of threads calling WaitOne to be let through. Calling Reset closes the gate. Threads that call WaitOne on a closed gate will block; when the gate is next opened, they will be released all at once. Apart from these differences, a ManualResetEvent functions like an AutoResetEvent.

As with AutoResetEvent, you can construct a ManualResetEvent in two ways:

    var manual1 = new ManualResetEvent (false);
    var manual2 = new EventWaitHandle (false, EventResetMode.ManualReset);

From Framework 4.0, there's another version of ManualResetEvent called ManualResetEventSlim. The latter is optimized for short waiting times, with the ability to opt into spinning for a set number of iterations. It also has a more efficient managed implementation and allows a Wait to be canceled via a CancellationToken. It cannot, however, be used for interprocess signaling. ManualResetEventSlim doesn't subclass WaitHandle; however, it exposes a WaitHandle property that returns a WaitHandle-based object when called (with the performance profile of a traditional wait handle).

A ManualResetEvent is useful in allowing one thread to unblock many other threads. The converse scenario is covered by CountdownEvent.
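As a small illustration of one thread releasing many, consider the following sketch (the `GateDemo` class is hypothetical):

```csharp
using System.Threading;

static class GateDemo
{
    // Starts the given number of waiting threads, opens the gate once,
    // and returns how many threads got through.
    public static int Run(int waiters)
    {
        int released = 0;
        using (var gate = new ManualResetEvent(false))
        {
            var threads = new Thread[waiters];
            for (int i = 0; i < waiters; i++)
            {
                threads[i] = new Thread(() =>
                {
                    gate.WaitOne();                      // Block until the gate opens
                    Interlocked.Increment(ref released);
                });
                threads[i].Start();
            }

            gate.Set();                                  // A single Set releases them all
            foreach (Thread t in threads) t.Join();
        }
        return released;
    }
}
```

`GateDemo.Run (4)` returns 4. With an AutoResetEvent in place of the ManualResetEvent, the single Set would release only one thread and the Join calls would never all complete.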

CountdownEvent

CountdownEvent lets you wait on more than one thread. The class is new to Framework 4.0 and has an efficient fully managed implementation.

If you're running on an earlier version of the .NET Framework, all is not lost! Later on, we show how to write a CountdownEvent using Wait and Pulse.

To use CountdownEvent, instantiate the class with the number of threads or "counts" that you want to wait on:

var countdown = new CountdownEvent (3); // Initialize with "count" of 3.

Calling Signal decrements the "count"; calling Wait blocks until the count goes down to zero. For example:

    static CountdownEvent _countdown = new CountdownEvent (3);

    static void Main()
    {
      new Thread (SaySomething).Start ("I am thread 1");
      new Thread (SaySomething).Start ("I am thread 2");
      new Thread (SaySomething).Start ("I am thread 3");

      _countdown.Wait();   // Blocks until Signal has been called 3 times
      Console.WriteLine ("All threads have finished speaking!");
    }

    static void SaySomething (object thing)
    {
      Thread.Sleep (1000);
      Console.WriteLine (thing);
      _countdown.Signal();
    }

Problems for which CountdownEvent is effective can sometimes be solved more easily using the structured parallelism constructs that we'll cover in Part 5 (PLINQ and the Parallel class).

You can reincrement a CountdownEvent's count by calling AddCount. However, if it has already reached zero, this throws an exception: you can't "unsignal" a CountdownEvent by calling AddCount. To avoid the possibility of an exception being thrown, you can instead call TryAddCount, which returns false if the countdown is zero.

To unsignal a countdown event, call Reset: this both unsignals the construct and resets its count to the original value.

Like ManualResetEventSlim, CountdownEvent exposes a WaitHandle property for scenarios where some other class or method expects an object based on WaitHandle.
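The interplay of AddCount, TryAddCount, and Reset can be traced on a single thread. The following sketch uses a hypothetical `CountdownDemo` class and reports what it observes as a string:

```csharp
using System;
using System.Threading;

static class CountdownDemo
{
    // Returns "tryAddSucceeded,addCountThrew,countAfterReset,isSetAfterReset".
    public static string Run()
    {
        using (var countdown = new CountdownEvent(2))
        {
            countdown.AddCount();                  // Count is now 3
            countdown.Signal(3);                   // Count reaches zero: signaled

            bool added = countdown.TryAddCount();  // Already at zero, so this fails

            bool threw = false;
            try { countdown.AddCount(); }          // At zero, AddCount throws instead
            catch (InvalidOperationException) { threw = true; }

            countdown.Reset();                     // Unsignal and restore the initial count

            return string.Format("{0},{1},{2},{3}",
                added, threw, countdown.CurrentCount, countdown.IsSet);
        }
    }
}
```

`Run()` returns "False,True,2,False": Reset restores the count passed to the constructor (2), not the value reached after the earlier AddCount.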

Creating a Cross-Process EventWaitHandle

EventWaitHandle's constructor allows a "named" EventWaitHandle to be created, capable of operating across multiple processes. The name is simply a string, and it can be any value that doesn't unintentionally conflict with someone else's! If the name is already in use on the computer, you get a reference to the same underlying EventWaitHandle; otherwise, the operating system creates a new one. Here's an example:

EventWaitHandle wh = new EventWaitHandle (false, EventResetMode.AutoReset, "MyCompany.MyApp.SomeName");

If two applications each ran this code, they would be able to signal each other: the wait handle would work across all threads in both processes.

Wait Handles and the Thread Pool

If your application has lots of threads that spend most of their time blocked on a wait handle, you can reduce the resource burden by calling ThreadPool.RegisterWaitForSingleObject. This method accepts a delegate that is executed when a wait handle is signaled. While it's waiting, it doesn't tie up a thread:

    static ManualResetEvent _starter = new ManualResetEvent (false);

    public static void Main()
    {
      RegisteredWaitHandle reg = ThreadPool.RegisterWaitForSingleObject
                                 (_starter, Go, "Some Data", -1, true);
      Thread.Sleep (5000);
      Console.WriteLine ("Signaling worker...");
      _starter.Set();
      Console.ReadLine();
      reg.Unregister (_starter);    // Clean up when we're done.
    }

    public static void Go (object data, bool timedOut)
    {
      Console.WriteLine ("Started - " + data);
      // Perform task...
    }

Output:

    (5 second delay)
    Signaling worker...
    Started - Some Data

When the wait handle is signaled (or a timeout elapses), the delegate runs on a pooled thread.

In addition to the wait handle and delegate, RegisterWaitForSingleObject accepts a "black box" object that it passes to your delegate method (rather like ParameterizedThreadStart), as well as a timeout in milliseconds (–1 meaning no timeout) and a boolean flag indicating whether the request is one-off rather than recurring.

RegisterWaitForSingleObject is particularly valuable in an application server that must handle many concurrent requests. Suppose you need to block on a ManualResetEvent and simply call WaitOne:

    void AppServerMethod()
    {
      _wh.WaitOne();
      // ... continue execution
    }

If 100 clients called this method, 100 server threads would be tied up for the duration of the blockage. Replacing _wh.WaitOne with RegisterWaitForSingleObject allows the method to return immediately, wasting no threads:

    void AppServerMethod()
    {
      RegisteredWaitHandle reg = ThreadPool.RegisterWaitForSingleObject
       (_wh, Resume, null, -1, true);
      ...
    }

    static void Resume (object data, bool timedOut)
    {
      // ... continue execution
    }

The data object passed to Resume allows continuance of any transient data.

WaitAny, WaitAll, and SignalAndWait

In addition to the Set, WaitOne, and Reset methods, there are static methods on the WaitHandle class to crack more complex synchronization nuts. The WaitAny, WaitAll, and SignalAndWait methods perform signaling and waiting operations on multiple handles. The wait handles can be of differing types (including Mutex and Semaphore, since these also derive from the abstract WaitHandle class). ManualResetEventSlim and CountdownEvent can also partake in these methods via their WaitHandle properties.

WaitAll and SignalAndWait have a weird connection to the legacy COM architecture: these methods require that the caller be in a multithreaded apartment, the model least suitable for interoperability. The main thread of a WPF or Windows application, for example, is unable to interact with the clipboard in this mode. We'll discuss alternatives shortly.

WaitHandle.WaitAny waits for any one of an array of wait handles; WaitHandle.WaitAll waits on all of the given handles, atomically. This means that if you wait on two AutoResetEvents:

- WaitAny will never end up "latching" both events.

- WaitAll will never end up "latching" only one event.
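The WaitAny guarantee can be checked with a short sketch in which both events are already signaled before the call (the `WaitAnyDemo` class is hypothetical):

```csharp
using System.Threading;

static class WaitAnyDemo
{
    // Signals two AutoResetEvents, calls WaitAny once, and reports whether
    // exactly one event was consumed (the one whose index WaitAny returned).
    public static bool Run()
    {
        using (var first = new AutoResetEvent(false))
        using (var second = new AutoResetEvent(false))
        {
            first.Set();
            second.Set();

            int index = WaitHandle.WaitAny(new WaitHandle[] { first, second });

            bool firstStillOpen = first.WaitOne(0);    // Poll without blocking
            bool secondStillOpen = second.WaitOne(0);

            bool chosenWasConsumed = index == 0 ? !firstStillOpen : !secondStillOpen;
            bool otherUntouched = index == 0 ? secondStillOpen : firstStillOpen;
            return chosenWasConsumed && otherUntouched;
        }
    }
}
```

The sketch deliberately checks only that one event was latched and the other left alone, rather than assuming which index WaitAny reports.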

SignalAndWait calls Set on one WaitHandle, and then calls WaitOne on another WaitHandle. After signaling the first handle, it will jump to the head of the queue in waiting on the second handle; this helps it succeed (although the operation is not truly atomic). You can think of this method as "swapping" one signal for another, and use it on a pair of EventWaitHandles to set up two threads to rendezvous or "meet" at the same point in time. Either AutoResetEvent or ManualResetEvent will do the trick. The first thread executes the following:

WaitHandle.SignalAndWait (wh1, wh2);

whereas the second thread does the opposite:

WaitHandle.SignalAndWait (wh2, wh1);

Alternatives to WaitAll and SignalAndWait

WaitAll and SignalAndWait won't run in a single-threaded apartment. Fortunately, there are alternatives. In the case of SignalAndWait, it's rare that you need its queue-jumping semantics: in our rendezvous example, for instance, it would be valid simply to call Set on the first wait handle, and then WaitOne on the other, if the wait handles were used solely for that rendezvous. In The Barrier Class, we'll explore yet another option for implementing a thread rendezvous.

In the case of WaitAll, an alternative in some situations is to use the Parallel class's Invoke method, which we'll cover in Part 5. (We'll also cover Tasks and continuations, and see how TaskFactory's ContinueWhenAny provides an alternative to WaitAny.)

In all other scenarios, the answer is to take the low-level approach that solves all signaling problems: Wait and Pulse.
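A rendezvous written with plain Set and WaitOne calls, as just described, might be sketched like this (the `RendezvousDemo` class is hypothetical, and the two handles are assumed to be used for nothing else):

```csharp
using System.Threading;

static class RendezvousDemo
{
    // Two threads meet: neither proceeds past its WaitOne until the other
    // has announced its arrival with Set.
    public static bool Run()
    {
        using (var wh1 = new AutoResetEvent(false))
        using (var wh2 = new AutoResetEvent(false))
        {
            bool otherPassed = false;

            var other = new Thread(() =>
            {
                wh2.Set();           // "I've arrived"
                wh1.WaitOne();       // Wait for the first thread to arrive
                otherPassed = true;
            });
            other.Start();

            wh1.Set();               // "I've arrived"
            wh2.WaitOne();           // Wait for the other thread to arrive

            other.Join();            // Join makes otherPassed safe to read here
            return otherPassed;
        }
    }
}
```

Because each thread signals before it waits, neither can block the other indefinitely, whichever one arrives first.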

Synchronization Contexts

An alternative to locking manually is to lock declaratively. By deriving from ContextBoundObject and applying the Synchronization attribute, you instruct the CLR to apply locking automatically. For example:

    using System;
    using System.Threading;
    using System.Runtime.Remoting.Contexts;

    [Synchronization]
    public class AutoLock : ContextBoundObject
    {
      public void Demo()
      {
        Console.Write ("Start...");
        Thread.Sleep (1000);           // We can't be preempted here
        Console.WriteLine ("end");     // thanks to automatic locking!
      }
    }

    public class Test
    {
      public static void Main()
      {
        AutoLock safeInstance = new AutoLock();
        new Thread (safeInstance.Demo).Start();     // Call the Demo
        new Thread (safeInstance.Demo).Start();     // method 3 times
        safeInstance.Demo();                        // concurrently.
      }
    }

Output:

    Start... end
    Start... end
    Start... end

The CLR ensures that only one thread can execute code in safeInstance at a time. It does this by creating a single synchronizing object, and locking it around every call to each of safeInstance's methods or properties. The scope of the lock (in this case, the safeInstance object) is called a synchronization context.

So, how does this work? A clue is in the Synchronization attribute's namespace: System.Runtime.Remoting.Contexts. A ContextBoundObject can be thought of as a "remote" object, meaning all method calls are intercepted. To make this interception possible, when we instantiate AutoLock, the CLR actually returns a proxy: an object with the same methods and properties as an AutoLock object, which acts as an intermediary. It's via this intermediary that the automatic locking takes place. Overall, the interception adds around a microsecond to each method call.

Automatic synchronization cannot be used to protect static type members, nor classes not derived from ContextBoundObject (for instance, a Windows Form).

The locking is applied internally in the same way. You might expect that the following example will yield the same result as the last:

    [Synchronization]
    public class AutoLock : ContextBoundObject
    {
      public void Demo()
      {
        Console.Write ("Start...");
        Thread.Sleep (1000);
        Console.WriteLine ("end");
      }

      public void Test()
      {
        new Thread (Demo).Start();
        new Thread (Demo).Start();
        new Thread (Demo).Start();
        Console.ReadLine();
      }

      public static void Main()
      {
        new AutoLock().Test();
      }
    }

(Notice that we've sneaked in a Console.ReadLine statement.) Because only one thread can execute code at a time in an object of this class, the three new threads will remain blocked at the Demo method until the Test method finishes, which requires the ReadLine to complete. Hence we end up with the same result as before, but only after pressing the Enter key. This is a thread-safety hammer almost big enough to preclude any useful multithreading within a class!

Further, we haven't solved a problem described earlier: if AutoLock were a collection class, for instance, we'd still require a lock around a statement such as the following, assuming it ran from another class:

if (safeInstance.Count > 0) safeInstance.RemoveAt (0);

unless this code's class was itself a synchronized ContextBoundObject!

A synchronization context can extend beyond the scope of a single object. By default, if a synchronized object is instantiated from within the code of another, both share the same context (in other words, one big lock!). This behavior can be changed by specifying an integer flag in the Synchronization attribute's constructor, using one of the constants defined in the SynchronizationAttribute class:

| Constant | Meaning |
|---|---|
| NOT_SUPPORTED | Equivalent to not using the Synchronization attribute |
| SUPPORTED | Joins the existing synchronization context if instantiated from another synchronized object, otherwise remains unsynchronized |
| REQUIRED (default) | Joins the existing synchronization context if instantiated from another synchronized object, otherwise creates a new context |
| REQUIRES_NEW | Always creates a new synchronization context |

So, if an object of class SynchronizedA instantiates an object of class SynchronizedB, they'll be given separate synchronization contexts if SynchronizedB is declared as follows:

    [Synchronization (SynchronizationAttribute.REQUIRES_NEW)]
    public class SynchronizedB : ContextBoundObject { ...

The bigger the scope of a synchronization context, the easier it is to manage, but the less the opportunity for useful concurrency. At the other end of the scale, separate synchronization contexts invite deadlocks. For example:

    [Synchronization]
    public class Deadlock : ContextBoundObject
    {
      public Deadlock Other;
      public void Demo() { Thread.Sleep (1000); Other.Hello(); }
      void Hello()       { Console.WriteLine ("hello");        }
    }

    public class Test
    {
      static void Main()
      {
        Deadlock dead1 = new Deadlock();
        Deadlock dead2 = new Deadlock();
        dead1.Other = dead2;
        dead2.Other = dead1;
        new Thread (dead1.Demo).Start();
        dead2.Demo();
      }
    }

Because each instance of Deadlock is created within Test (an unsynchronized class), each instance will get its own synchronization context, and hence, its own lock. When the two objects call upon each other, it doesn't take long for the deadlock to occur (one second, to be precise!). The problem would be particularly insidious if the Deadlock and Test classes were written by different programming teams. It may be unreasonable to expect those responsible for the Test class to be even aware of their transgression, let alone know how to go about resolving it. This is in contrast to explicit locks, where deadlocks are usually more obvious.

Reentrancy

A thread-safe method is sometimes called reentrant, because it can be preempted part way through its execution, and then called again on another thread without ill effect. In a general sense, the terms thread-safe and reentrant are considered either synonymous or closely related.

Reentrancy, however, has another more sinister connotation in automatic locking regimes. If the Synchronization attribute is applied with the reentrant argument true:

[Synchronization(true)]

then the synchronization context's lock will be temporarily released when execution leaves the context. In the previous example, this would prevent the deadlock from occurring; obviously desirable. However, a side effect is that during this interim, any thread is free to call any method on the original object ("re-entering" the synchronization context), unleashing the very complications of multithreading one is trying to avoid in the first place. This is the problem of reentrancy.

Because [Synchronization(true)] is applied at a class-level, this attribute turns every out-of-context method call made by the class into a Trojan for reentrancy.

While reentrancy can be dangerous, there are sometimes few other options. For instance, suppose one was to implement multithreading internally within a synchronized class, by delegating the logic to workers running objects in separate contexts. These workers may be unreasonably hindered in communicating with each other or the original object without reentrancy.

This highlights a fundamental weakness with automatic synchronization: the extensive scope over which locking is applied can actually manufacture difficulties that may never have otherwise arisen. These difficulties (deadlocking, reentrancy, and emasculated concurrency) can make manual locking more palatable in anything other than simple scenarios.


Threading in C# is from Chapters 21 and 22 of C# 4.0 in a Nutshell.

© 2006-2014 Joseph Albahari, O'Reilly Media, Inc. All rights reserved

Source: https://www.albahari.com/threading/part2.aspx
